Abstract

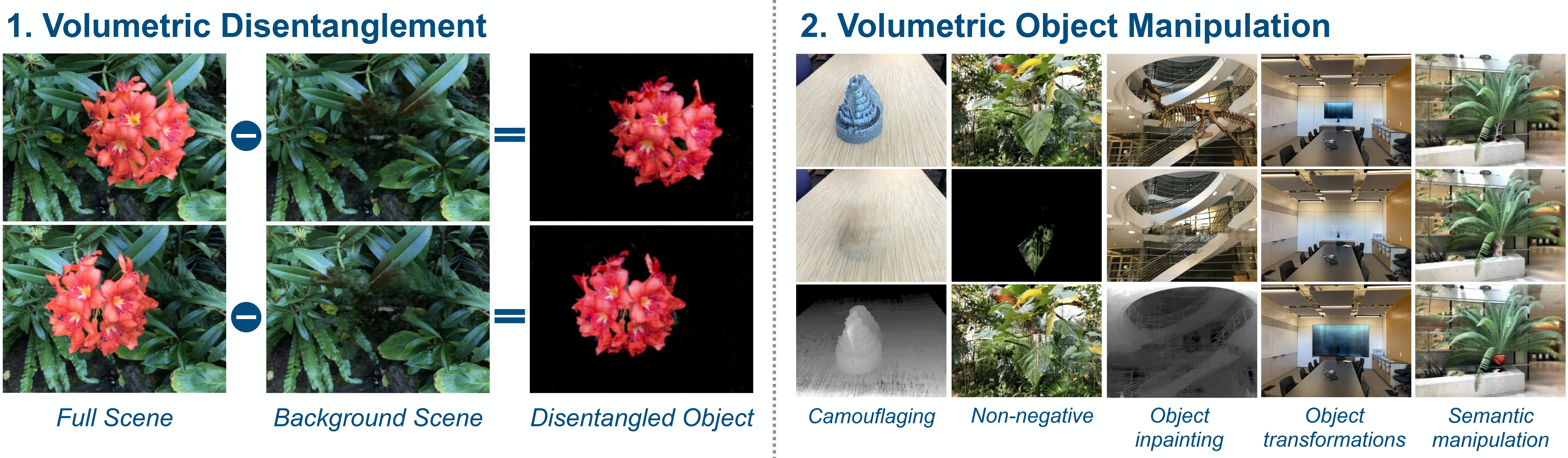

Recently, advances in differential volumetric rendering enabled significant breakthroughs in the photo-realistic and fine-detailed reconstruction of complex 3D scenes, which is key for many virtual reality applications. However, in the context of augmented reality, one may also wish to effect semantic manipulations or augmentations of objects within a scene. To this end, we propose a volumetric framework for (i) disentangling or separating, the volumetric representation of a given foreground object from the background, and (ii) semantically manipulating the foreground object, as well as the background. Our framework takes as input a set of 2D masks specifying the desired foreground object for training views, together with the associated 2D views and poses, and produces a foreground-background disentanglement that respects the surrounding illumination, reflections, and partial occlusions, which can be applied to both training and novel views.

Our method enables the separate control of pixel color and depth as well as 3D similarity transformations of both the foreground and background objects. We subsequently demonstrate the applicability of our framework on a number of downstream manipulation tasks including object camouflage, non-negative 3D object inpainting, 3D object translation, 3D object inpainting, and 3D text-based object manipulation.

TL;DR We propose a framework for disentangling a 3D scene into a forground and background volumetric representations and show a variety of downstream applications involving 3D manipulation.

Results

We demonstrate that we can successfully disentangle the foreground and background volumes. Subsequently, we demonstrate some of the many manipulation tasks this disentanglement enables.

Scene Disentanglement

The following videos correspond the full scene, background scene and the foreground object. The first row corresponds to the RGB output while the second to the disparity.

| Full Scene | Background Scene | Foreground Object |

Object Removal

The following videos correspond to the full scene and background scene with the object removed.

| Full Scene | Background Scene |

Foreground Transformation

Demonstration of object transformation. The first column shows the full scene. The second column shows the background scene without the TV. The third column shows the disentangled foreground TV object. Lastly, the fourth column demonstrates the scene with the enlarged TV.

| Full Scene | Background Scene | Foreground Object | Transformed Scene |

Object Camouflage

Demonstration of object camouflage. The first row corresponds to RGB output while the second to disparity. The first column shows the full scene. The second column shows the background without the fortress object. The third column shows the camouflaged scene. Note how the RGB output resembles that of column 2, while the disparity matches that of the full scene in the first column.

| Full Scene | Background Scene | Camouflaged Foreground Object |

Non Negative Inpainting

Demonstration of non-negative inpainting. In this setting, we are interested in performing non-negative changes to views of the full scene so as to most closely resemble the background volume. This constraint is imposed in optical-see-through devices that can only add light onto an image. The first column shows the full scene. The second column shows the residual 3D scene added to the full scene. The third column shows the resulting scene of adding the residual to the full scene. The fourth column shows the desired output scene (background scene).

| Full Scene | Residual Scene | Inpainted Scene | Background Scene |

Semantic Object Manipulation

Demonstration of 3D semantic editing using a text prompt. (a) The first row, first column shows the full scene. (b) The first row, second column shows the removal of the tree trunk. (c) The first row, second column shows the removal of the window mullion. Note how the occluding tree object was not removed. (d-f) The second row (columns one, two and three) show the semantic manipulation results of the tree trunk using target text prompts. The text prompts used are: "old tree" (d), "aspen tree" (e) and "strawberry" (d).

Citation

Acknowledgement

This research was supported by the Pioneer Centre for AI, DNRF grant number P1.